What Is Randomness?


The BBC Radio 4 programme ‘In Our Time’ looked at the topic of randomness today. The In Our Time website has a link to the programme on the iPlayer, if you missed it first time round.

What is meant by randomness? Well, a truly random event is not deterministic, i.e. it is not possible to determine the next outcome, based upon the previous outcomes, or on anything else.

Random processes are very important in many areas of maths, science and life in general, but truly random processes are remarkably difficult to achieve. Why should this be the case? Because many processes that we consider to be random, such as rolling a die, are in principle deterministic. You could, theoretically, determine the outcome of the roll if you knew the die’s exact position, size, etc.

The ancient Greek philosopher and mathematician Democritus (ca. 460 BC – ca. 370 BC) was a member of the group known as the Atomists. They pioneered the concept that all matter can be subdivided into its fundamental building blocks, atoms. Democritus held that there was no such thing as true randomness. He gave the example of two men meeting at a well, both of whom consider their meeting to have been pure chance. What they did not know is that the meeting was probably pre-arranged by their families. This can be considered an analogy for the deterministic dice roll: there are factors determining the outcome, even if we cannot measure or control them precisely.

Epicurus (341 BC – 270 BC), a later Greek philosopher, disagreed. Although he had no idea how small atoms really were, he suggested that they swerve randomly in their paths. On this view, no matter how well we understand the laws of motion, there will always be randomness introduced by this underlying property of atoms.

Aristotle worked further on probability, but it remained a non-mathematical pursuit. He divided all things into the certain, the probable and the unknowable. For example, he wrote about the outcome of throwing knucklebones (early dice) as unknowable.

As with many other areas of mathematics, the topic of randomness and probability did not resurface in Europe until the Renaissance. The mathematician and gambler Gerolamo Cardano (24 September 1501 – 21 September 1576) correctly wrote down the probabilities of throwing a six with one die, a double six with two dice, and a triple with three dice. He was the first person to notice, or at least to record, the fact that you’re more likely to throw 7 with two dice than any other total. These revelations formed part of his handbook for gamblers. Cardano had suffered terribly because of his penchant for gambling (at times he pawned all his family’s belongings, ended up in a poorhouse, and got into fights). This book was his way of telling fellow gamblers how much they should bet and how to stay out of trouble.

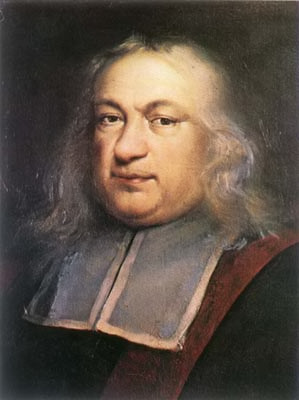
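Cardano’s dice probabilities are easy to check by listing every equally likely roll. The short Python sketch below does this with exact fractions. The function and variable names are my own, and it assumes that “a triple” means three sixes (the article does not say whether any triple would count).

```python
from collections import Counter
from fractions import Fraction
from itertools import product

FACES = range(1, 7)  # a fair six-sided die


def probability(num_dice, event):
    """Exact probability that `event` holds, counting all equally likely rolls."""
    rolls = list(product(FACES, repeat=num_dice))
    favourable = sum(1 for roll in rolls if event(roll))
    return Fraction(favourable, len(rolls))


p_six = probability(1, lambda roll: roll == (6,))
p_double_six = probability(2, lambda roll: roll == (6, 6))
# Assumption: "a triple" is read here as three sixes.
p_triple_six = probability(3, lambda roll: roll == (6, 6, 6))

# Count how many of the 36 two-dice rolls give each total.
sum_counts = Counter(a + b for a, b in product(FACES, repeat=2))
most_likely_sum = max(sum_counts, key=sum_counts.get)

print(p_six, p_double_six, p_triple_six)
print("Most likely total with two dice:", most_likely_sum)
```

The total 7 wins because six of the 36 possible rolls produce it (1+6, 2+5, 3+4 and their reverses), more than for any other total.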

In the 17th century, Fermat and Pascal collaborated to develop a more formalised theory of probability, in which numbers were assigned to the likelihood of events. Pascal developed the idea of an expected value and famously used a probabilistic argument, Pascal’s Wager, to justify his belief in God and his virtuous life.

Today there are sophisticated tests that can be performed on a sequence of numbers to determine whether the sequence is truly random, or whether it has been produced by a formula, a human being, or some other means. For example, does the digit 7 occur one tenth of the time (plus or minus some allowable error)? Is the digit 1 followed by another 1 one tenth of the time?
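The two questions above can be turned into a few lines of Python. This is only a rough sketch: the function names are invented for illustration, and the “random” digits come from Python’s built-in generator, which is itself a deterministic formula that happens to behave well on checks like these.

```python
import random
from collections import Counter


def digit_frequencies(digits):
    """Fraction of the sequence taken up by each digit 0 to 9."""
    counts = Counter(digits)
    return {d: counts.get(d, 0) / len(digits) for d in range(10)}


def repeat_rate(digits, d):
    """Of the times digit `d` appears (with a successor), how often is it followed by `d` again?"""
    followers = [b for a, b in zip(digits, digits[1:]) if a == d]
    if not followers:
        return 0.0
    return sum(1 for b in followers if b == d) / len(followers)


rng = random.Random(2024)  # fixed seed so the run is repeatable
sample = [rng.randrange(10) for _ in range(100_000)]

freq = digit_frequencies(sample)
rate = repeat_rate(sample, 1)
print("Share of 7s:", freq[7])
print("Share of 1s followed by another 1:", rate)
```

Both figures should come out close to one tenth. A sequence such as 0, 1, 0, 1, ... fails immediately: half its digits are 1s, and a 1 is never followed by another 1.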

An increasingly sophisticated series of tests can be fired into action. We have the “poker test”, which analyses digits in groups of 5, to see whether there are two pairs, three of a kind, etc., and compares the frequency of these patterns with those expected in a truly random distribution. The chi-squared test is another statistician’s favourite. Given the pattern that has actually occurred, it measures how far the observed frequencies depart from those expected of a random process, and gives the probability of seeing a departure at least that large by chance.
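Here is a minimal sketch of both ideas in Python, using only the standard library. The names are illustrative. The threshold 16.919 is the usual 5% critical value of the chi-squared distribution with 9 degrees of freedom (ten digit categories minus one), hard-coded here rather than computed.

```python
import random
from collections import Counter

CRITICAL_5_PERCENT_DF9 = 16.919  # chi-squared critical value, 9 degrees of freedom


def chi_squared_statistic(observed, expected):
    """Sum of (observed - expected)^2 / expected over all categories."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))


def poker_hand(group):
    """Classify a group of 5 digits by its pattern of repeated digits."""
    pattern = tuple(sorted(Counter(group).values(), reverse=True))
    names = {
        (5,): "five of a kind",
        (4, 1): "four of a kind",
        (3, 2): "full house",
        (3, 1, 1): "three of a kind",
        (2, 2, 1): "two pairs",
        (2, 1, 1, 1): "one pair",
        (1, 1, 1, 1, 1): "all different",
    }
    return names[pattern]


rng = random.Random(7)  # fixed seed so the run is repeatable
digits = [rng.randrange(10) for _ in range(50_000)]

# Chi-squared test on how often each digit appears.
counts = Counter(digits)
observed = [counts[d] for d in range(10)]
expected = [len(digits) / 10] * 10
stat = chi_squared_statistic(observed, expected)
print("Chi-squared statistic:", round(stat, 2), "threshold:", CRITICAL_5_PERCENT_DF9)

# Poker test: tally the hand types over consecutive groups of 5 digits.
hands = Counter(poker_hand(digits[i:i + 5]) for i in range(0, len(digits), 5))
for name, count in hands.most_common():
    print(name, count / sum(hands.values()))
```

A statistic above the threshold would be seen only about one time in twenty from a genuinely uniform source, so it counts as evidence against randomness. For the poker test, the observed shares are compared with the theoretical ones; for instance, five digits are all different with probability 10 × 9 × 8 × 7 × 6 / 100,000 = 0.3024.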

But none of these tests is perfect. There are deterministic sequences that look random (they pass the tests) but are not. For example, the digits of the irrational number π look like a random sequence and pass the standard tests for randomness, but of course they are not random. The digits of π form a deterministic sequence: mathematicians can calculate them to as many decimal places as they please, given powerful enough computers.

Another naturally occurring, seemingly random distribution is that of the prime numbers. The Riemann Hypothesis provides a way to calculate the distribution of the primes, but it remains unproven, and nobody knows whether it holds for very large values. However, like the digits of the irrational number π, the distribution of the primes does pass the tests of randomness. It remains deterministic, but unpredictable.

Another useful measure of randomness is a quantity called the Kolmogorov complexity, named after the 20th-century Russian mathematician Andrey Kolmogorov. The Kolmogorov complexity of a sequence is the length of its shortest possible description. For example, the sequence 01010101… could be described simply as “Repeat 01”. This is a very short description, indicating that the sequence is certainly not random.

However, for a truly random sequence, it would be impossible to describe the sequence of digits in any simplified form. The description would be just as long as the sequence itself, which indicates that the sequence would appear to be random.
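The true Kolmogorov complexity cannot be computed in general, but a file compressor gives a rough stand-in: the compressed size is the length of one particular description, so it can only overestimate the shortest one. The sketch below uses Python’s `zlib` module for this. The helper name is my own, and the “random” bits come from a seeded pseudo-random generator, which strictly speaking has a short description too (the program plus its seed), even though a general-purpose compressor cannot find it.

```python
import random
import zlib


def compressed_size(text):
    """Bytes needed by zlib to describe `text`: a crude upper bound on its complexity."""
    return len(zlib.compress(text.encode("ascii"), 9))


patterned = "01" * 5_000  # "Repeat 01", 10,000 characters long

rng = random.Random(1)  # fixed seed so the run is repeatable
random_bits = "".join(rng.choice("01") for _ in range(10_000))

print("Patterned sequence:", compressed_size(patterned), "bytes")
print("Random-looking sequence:", compressed_size(random_bits), "bytes")
```

The patterned sequence shrinks to a tiny fraction of its length, while the random-looking one cannot be squeezed much below the 1,250 bytes needed to store 10,000 unpredictable bits.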

During the last two centuries, scientists, mathematicians, economists and many others have begun to realise that sequences of random numbers are very important to their work. And so in the 19th century, methods were devised to generate random numbers. Dice were an obvious choice, but dice can be biased. Walter Weldon and his wife spent months at their kitchen table rolling a set of 12 dice over 26,000 times, but these data were found to be flawed because of biases in the dice, which seems a terrible shame.

The first published collection of random numbers appears in a book of 1927 by Leonard H. C. Tippett. After that, there were many attempts, many of them flawed. One of the best-known methods was that used by John von Neumann, who pioneered the middle-square method, in which a number with a fixed number of digits, say n, is squared, the middle n digits are extracted from the result and squared again, and so on. Very quickly, this process yields a set of digits that look random, although the sequence can eventually fall into a short repeating cycle.
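The middle-square method is simple enough to write in a few lines. The sketch below is one straightforward reading of the description above, with an illustrative function name and small 4-digit numbers so the arithmetic can be followed by hand; it is not a reconstruction of von Neumann’s own program.

```python
def middle_square(seed, n_digits, count):
    """Generate `count` numbers by repeatedly squaring and keeping the middle n_digits digits."""
    if n_digits % 2:
        raise ValueError("n_digits must be even so that the middle is well defined")
    values = []
    x = seed
    for _ in range(count):
        # Pad the square with leading zeros to exactly 2 * n_digits digits.
        squared = str(x * x).zfill(2 * n_digits)
        start = n_digits // 2
        x = int(squared[start:start + n_digits])
        values.append(x)
    return values


print(middle_square(1234, 4, 3))  # 1234^2 = 01522756 -> 5227, and so on
print(middle_square(2500, 4, 3))  # 2500^2 = 06250000 -> 2500 again
```

Starting from 1234 the method gives 5227, 3215, 3362. Starting from 2500 it returns 2500 forever, which shows how the method can get stuck on an unlucky value.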

In the 1936 US presidential election, all the opinion polls pointed to a close result, with a possible win for the Republican Party’s candidate Alf Landon. In the event, the outcome was a landslide for the Democratic Party’s Franklin D Roosevelt. The opinion pollsters had chosen bad sampling techniques. In their attempts to be high-tech, they had telephoned people to ask them about their voting intentions. In the 1930s, wealthier people, who were largely Republican voters, were far more likely to have a telephone, and so the results of the surveys were deeply biased. In surveys, truly randomising the sample population is of prime importance.
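A toy simulation shows how much damage a biased sample can do. Every number in the Python sketch below is invented purely for illustration and is not 1936 data: it assumes an electorate of 100,000 in which 30% own a telephone, 60% of telephone owners back Landon, and 30% of everyone else does.

```python
import random

rng = random.Random(1936)  # fixed seed so the run is repeatable

# Each voter is a pair (has_phone, votes_landon). All counts are made up.
population = (
    [(True, True)] * 18_000      # phone owners backing Landon
    + [(True, False)] * 12_000   # phone owners backing Roosevelt
    + [(False, True)] * 21_000   # non-owners backing Landon
    + [(False, False)] * 49_000  # non-owners backing Roosevelt
)


def landon_share(people):
    """Fraction of the given voters who back Landon."""
    return sum(1 for _, votes_landon in people if votes_landon) / len(people)


phone_owners = [person for person in population if person[0]]
phone_sample = rng.sample(phone_owners, 2_000)  # pollster who only rings people up
random_sample = rng.sample(population, 2_000)   # pollster who samples everyone at random

print("True Landon share:   ", landon_share(population))
print("Telephone poll says: ", landon_share(phone_sample))
print("Random sample says:  ", landon_share(random_sample))
```

In this made-up electorate Landon’s true support is 39%, yet the telephone poll reports roughly 60% and predicts the wrong winner. The random sample of the same size lands close to the truth.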

Likewise, random sampling is very important in medical trials. Choosing a biased sample set (e.g. too many women, too many young people, etc.) can make a drug appear more or less likely to work, biasing the experiment, with possibly dangerous consequences.

One thing is certain: humans are not very good at producing random sequences and they are not very good at spotting them either. When tested with two patterns of dots, a human being is particularly bad at deciding which pattern has been generated at random. Likewise, when trying to create a random sequence of numbers, very few people include features such as digits occurring three times in a row, which is a very prominent feature of random sequences.
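To see how common such runs really are, the Python sketch below generates many sequences of 100 digits and counts how many contain the same digit three times in a row. The function names and the choice of 100 digits are my own, and the digits come from Python’s pseudo-random generator rather than a truly random source.

```python
import random


def has_triple(digits):
    """True if some digit appears three times in a row."""
    return any(a == b == c for a, b, c in zip(digits, digits[1:], digits[2:]))


def fraction_with_triple(length, trials, rng):
    """Share of randomly generated digit sequences that contain a triple."""
    hits = sum(
        has_triple([rng.randrange(10) for _ in range(length)])
        for _ in range(trials)
    )
    return hits / trials


rng = random.Random(3)  # fixed seed so the run is repeatable
share = fraction_with_triple(length=100, trials=5_000, rng=rng)
print("Share of 100-digit sequences containing a triple:", share)
```

More than half of the sequences contain a triple, so a hand-written list of 100 “random” digits with no triple at all should raise suspicion.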

But is there anything truly random? Going back to the die we considered at the start, where a knowledge of the precise initial conditions would have allowed us to predict the outcome, surely the same is true of any physical process creating a set of numbers?

Well, so far, atomic and quantum physics have come closest to providing us with truly unpredictable events. It is, to date, impossible to determine precisely when a radioactive material will decay. It seems random, but maybe we simply don’t understand. At the moment, it remains probably the only way to generate truly random sequences.

Ernie, the UK Government’s premium bond number generator, is now in its fourth incarnation. It must be random, in order to give all the country’s premium bond holders an equal chance of a prize. It contains a chip that exploits the thermal noise within itself, i.e. the random movement of its electrons. Government statisticians perform tests on the number sequences that it generates, and they do indeed pass the tests for randomness.

Other applications include the random prime numbers used to encrypt your credit card number in internet transactions. The National Lottery machines use a set of very light balls and currents of air to mix them up, but like the dice, this could, in theory, be predicted.

Finally, the Met Office uses sets of random numbers for its ensemble forecasts. Sometimes it is difficult to predict the weather because of the well-known “chaos theory” – that the final state of the atmosphere is highly dependent on the precise initial conditions. It is impossible to measure the initial conditions to anything like the precision required, so atmospheric scientists feed their computer models various different scenarios, with the initial conditions varying slightly in each. This results in a set of different forecasts and a weather presenter who talks in percentage chances, rather than in certainties.
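The idea behind an ensemble forecast can be sketched with a toy chaotic system. The Python example below uses the logistic map as a stand-in for the atmosphere. This is purely an illustrative assumption and has nothing to do with the Met Office’s actual models; the function names, the starting value 0.3 and the size of the perturbations are likewise invented.

```python
import random


def logistic_step(x, r=4.0):
    """One step of the logistic map, a simple chaotic system when r = 4."""
    return r * x * (1.0 - x)


def run_ensemble(x0, members, steps, noise, rng):
    """Run several copies of the model from slightly different starting values.

    Returns the final states and the spread (max minus min) at every step.
    """
    states = [x0 + rng.uniform(-noise, noise) for _ in range(members)]
    spreads = [max(states) - min(states)]
    for _ in range(steps):
        states = [logistic_step(x) for x in states]
        spreads.append(max(states) - min(states))
    return states, spreads


rng = random.Random(42)  # fixed seed so the run is repeatable
final_states, spreads = run_ensemble(x0=0.3, members=10, steps=60, noise=1e-9, rng=rng)

# Report the forecast as a percentage chance rather than a single answer.
chance_above_half = sum(x > 0.5 for x in final_states) / len(final_states)
print("Spread at the start:", spreads[0])
print("Spread after 60 steps:", spreads[-1])
print("Chance the final value exceeds 0.5:", chance_above_half)
```

The ten runs begin within a couple of billionths of each other and end up scattered across the whole range, which is why the forecast is best stated as a chance rather than a certainty.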

See also: In Our Time.

luke@mathsbank.co.uk http://mathsbank.co.uk/
